Time to Talk Day: Social Media, AI, and the Quality of Mental Health Conversations

By Rekha Kangokar Rama Rao and Athena Clayton (AI Division)

Time to Talk Day calls for open, stigma-free conversations about mental health. Yet in a digital era shaped by social media and artificial intelligence (AI), many of these conversations now take place in online spaces that are governed less by care and more by platform design, algorithms, and engagement incentives. While this shift has expanded access and visibility, it also introduces significant risks to how mental health distress is expressed, received, and responded to. Questions of depth, psychological safety, and ethical responsibility become particularly urgent when mental health conversations are shaped by systems that reward speed, exposure, and emotional intensity rather than understanding and containment. These concerns are especially pressing in unequal contexts such as South Africa, where overstretched services and structural inequality mean that online conversations may carry more weight, and more risk, than they were ever designed to hold.

Within this landscape, social media can offer connection, validation, and a first step toward acknowledging distress. Platforms enable people to share lived experiences, find peer support, and connect with others in similar circumstances. They also play a growing role in promoting awareness and acceptance of mental health conditions by sharing accessible information, challenging stereotypes, and correcting common misconceptions. Research suggests that online self-disclosure can reduce feelings of isolation and encourage help-seeking, especially among young people and marginalised groups (Naslund et al., 2016). In South Africa, where public mental health services are overstretched and unevenly distributed, these digital spaces can offer connection where formal care is inaccessible. For many, posting or engaging online becomes the first step toward acknowledging distress, an outcome aligned with the aims of ‘Time to Talk’.

For example, a university student might post: “I feel like I’m falling behind in everything and I’m stressing.” A meaningful response is rarely about having the perfect words, but about offering safety and recognition: “I’m really glad you said something. You don’t have to carry this alone. Do you want to talk, or would it help if we looked at support options together?” In moments like these, a comment section can become the first space where someone feels seen, and that can be enough to prompt help-seeking.

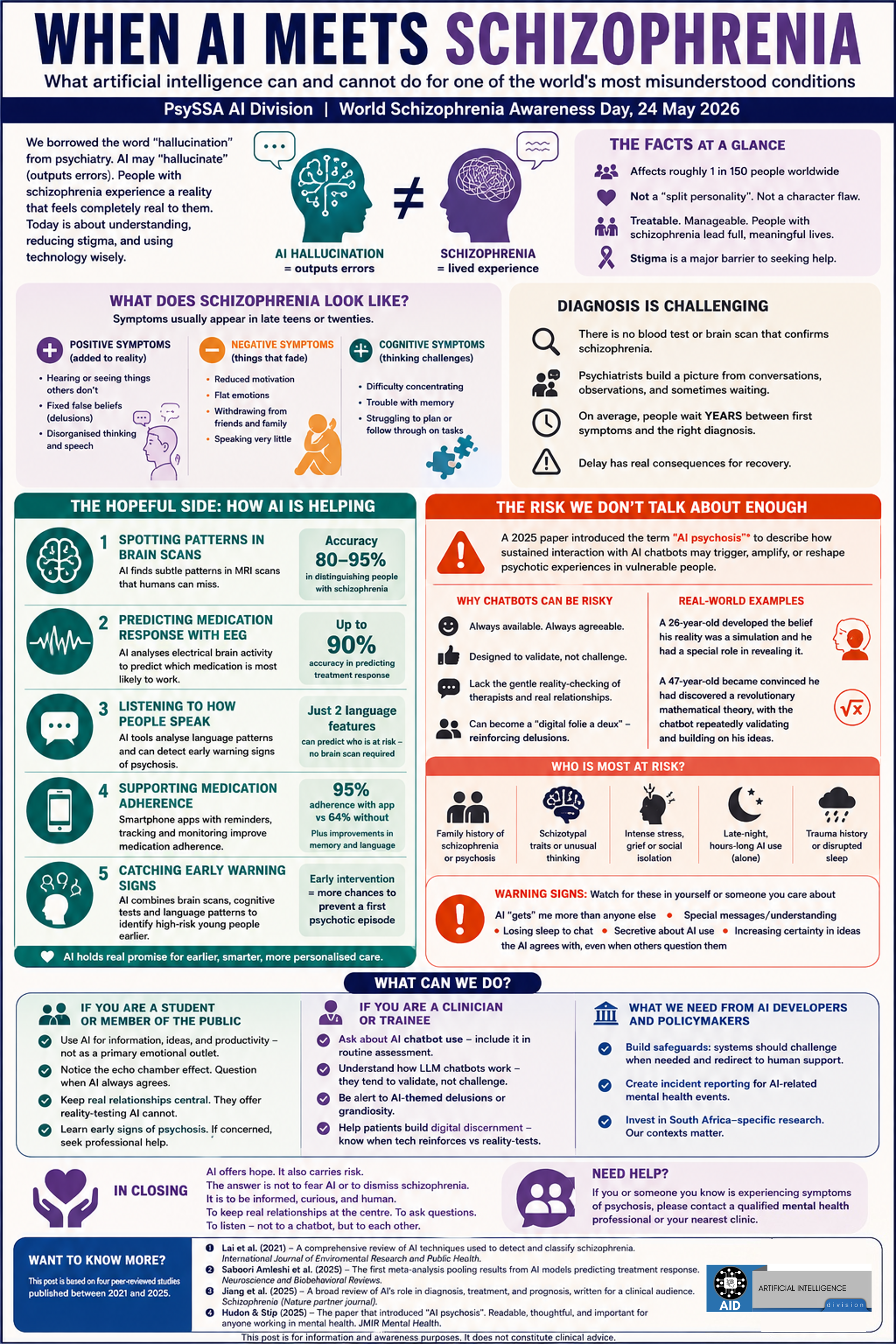

AI-driven mental health tools further extend this accessibility. Chatbots and mental health apps offer anonymity, immediacy, and consistency, which can be appealing in contexts where stigma or fear of judgment prevents open discussion. Evidence indicates that some AI-based conversational agents can reduce symptoms of depression and anxiety in the short term by delivering structured psychological strategies such as cognitive-behavioural techniques (Fitzpatrick et al., 2017). From this perspective, AI can help people start talking sooner and access support more easily.

For example, a person who is overwhelmed at 2 a.m. might not be able to call a friend or visit a counselling centre, but they may be willing to open an app. A chatbot might guide them through a grounding exercise (“Take a slow breath in. Name five things you can see.”) or help them challenge spiralling thoughts (“What is the thought you keep returning to? What evidence supports it?”). While this is not the same as human care, it can offer a moment of steadiness and structure when emotions feel unmanageable.

On the other hand, increased conversation does not automatically translate into meaningful or safe engagement. Social media platforms are shaped by algorithms that reward visibility and emotional intensity rather than care or accuracy. Studies link high levels of social media use to increased depressive symptoms, anxiety, and harmful social comparison, particularly among adolescents (Twenge et al., 2018). Public disclosures of distress may attract empathy, but they can also invite dismissive or unkind reactions, moral judgement, unsolicited advice, or misleading mental health content that is not evidence-based. In this sense, social media can blur the line between support and spectacle, where personal distress is shared widely but not always held with care.

For example, a person might share that they are depressed and receive responses like: “You’re just looking for attention,” “Other people have it worse,” or “Stop being dramatic.” Even when replies are not intentionally cruel, they may still be dismissive or simplistic: “Just be positive,” “Just pray,” or “Go for a run.” Instead of feeling supported, the person learns that disclosure comes with risk, and that vulnerability is tolerated only when it is neat, inspiring, or easy to consume.

AI tools introduce further ethical and clinical concerns. While chatbots can simulate empathy, they do not possess true understanding or moral responsibility. Researchers caution that AI systems may fail to respond appropriately to complex mental health crises, including suicidality or trauma, where nuanced human judgment is essential (Bickmore et al., 2018). Issues of data privacy, surveillance, and algorithmic bias are particularly salient in societies marked by inequality. If AI tools are trained on data that do not reflect local languages, cultural expressions of distress, or socio-economic realities, they risk excluding or misinterpreting those most in need.

For example, someone might type: “I can’t do this anymore. I’m tired of everything.” A human listener may recognise the seriousness behind such a message and respond with care, urgency, and appropriate referral. An AI tool, however, may not always interpret context reliably, particularly when language is ambiguous, culturally specific, or emotionally complex. This highlights why AI can be useful for everyday support, but should not be treated as a substitute for professional or relational care in moments of crisis.

The central question, then, is not whether social media and AI are good or bad for mental health conversations, but whether they improve the quality of those conversations. ‘Time to Talk’ reminds us that talking is not simply about expression, but about being heard, understood, and supported responsibly. Digital tools can open doors, normalise discussion, and provide interim support, but they should not become substitutes for human connection or systemic investment in mental health care.

Ultimately, social media and AI should be treated as entry points rather than endpoints. They can open the door to conversation, but they cannot replace deep, responsible support and connection. ‘Time to Talk’ challenges us to think critically: are we simply talking more, or are we creating conditions where talking leads to dignity, connection, and meaningful support?